Jimin Pi, Ph.D.

Hong Kong University of Science and Technology

Email: jpi (at) connect.ust.hk

Publications:

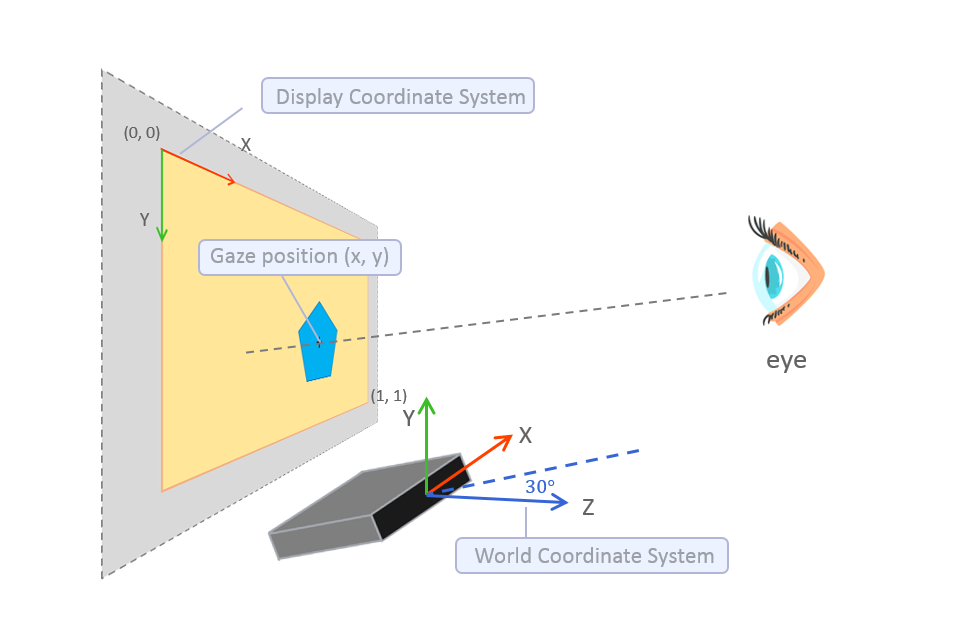

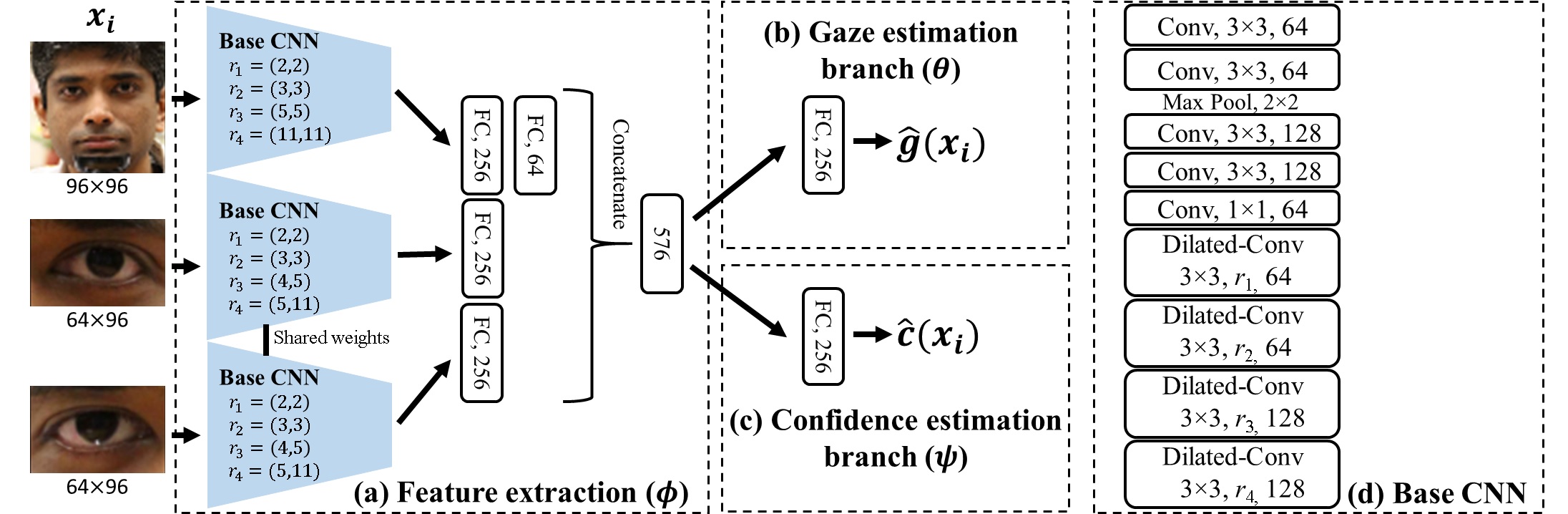

Unsupervised Outlier Detection in Appearance-Based Gaze Estimation.

Zhaokang Chen*, Didan Deng*, Jimin Pi*, and Bertram E. SHI. (*Equal contribution)

ICCV 2019 Workshop and Challenge on Real-World Recognition from Low-Quality Images and Videos.

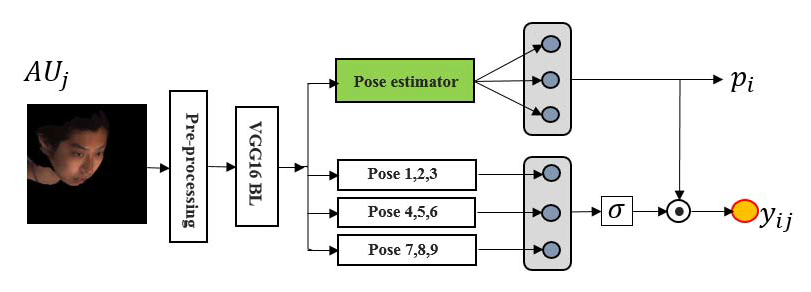

Pose-independent Facial Action Unit Intensity Regression Based on Multi-task Deep Transfer Learning.

Yuqian Zhou, Jimin Pi, and Bertram E. SHI.

IEEE International Conference on Automatic Face & Gesture Recognition (FG), 2017.

PDF

Last updated: October, 2019
